Tag: chess

Thing 91: Chesslike Games Progress

Alright, small progress on chesslike.games. Today: added a /play url that lets you "create games", which are really just dummy things for now. Still lots more work to be done.

Thing 73: Chesslike Games Minor Theming Improvements

I made some minor theming improvements to chesslike.games.

Thing 72: Chesslike Games Sign In (for real this time)

I fixed yesterday's thing which was actually broken. Whoops.

Thing 71: Chesslike Games Sign In

I implemented sign in for chesslike.games. You can now sign up, sign in, and sign out but you can't do anything else yet.

Thing 70: Chesslike Games Sign Up

I think I implemented sign up for chesslike.games. You can sign up but you can't sign in yet. lol

Thing 68: Chesslike.games

I made a website: chesslike.games where I'll eventually add the ability to play the chess variants I've been working on online. Currently it's just a "coming soon" page.

Thing 67: Chess-Player (Part 8)

A work-in progress playable chess component from scratch.

Today's update: a very minor tweak to some of the hover states (now the piece hovered over doesn't get partially covered by the hover effect).

Next time: idk, i'll think of something

Thing 66: Chess-Player (Part 7)

A work-in progress playable chess component from scratch.

Today's update: a previous-move indicator.

Next time: idk, i'll think of something

Thing 65: Chess-Player (Part 6)

A work-in progress playable chess component from scratch.

Today's update: move history. Could definitely stand to have some better styling though.

Next time: idk, i'll think of something

Thing 64: Chess-Player (Part 5)

A work-in progress playable chess component from scratch.

Today's update: you can drag and drop pieces now! That was more effort than you'd think.

Next time: idk, i'll think of something

Thing 63: Chess-Player (Part 4)

A work-in progress playable chess component from scratch.

Today's update: move validation AND visuals for where the piece can move! No castling or en-passant yet, though, and pawns always promote to queens, since that's simpler for now. Also, you're allowed to leave your king in check and let it get taken. I'll figure out a good solution later for all these things.

Next time: maybe a little drag and drop action?

Thing 62: Chess-Player (Part 3)

A work-in progress playable chess component from scratch.

Today's update: you can teleport pieces on the board!

Next time: some move validation, I hope

Thing 61: Chess-Player (Part 2)

A work-in progress playable chess component from scratch.

Today's update: pieces on the board!

Next time: some interactivity, I hope

Thing 60: Generic Chess-Variant Player

I'm working on improving the UI of my implementation of Haft Schroedinger Chess. I'm trying to make a general-purpose chess-game-player that will work with this and many other future variants I might want to implement. Eventually, to allow online play as well (though, I'll need some new infrastructure to implement that).

Here's what I've got so far:

Yup, just an empty chessboard. Starting from scratch and takin' it slow.

Thing 59: A Cool Feature of Haft Schroedinger Chess

The Antumbra Station write-up on Haft Schroedinger Chess says that one of the consequences of the rules is that

You can't capture the Queen unless you capture a piece that is currently locked in as the Queen.

I demonstrate in this post that that claim is false!

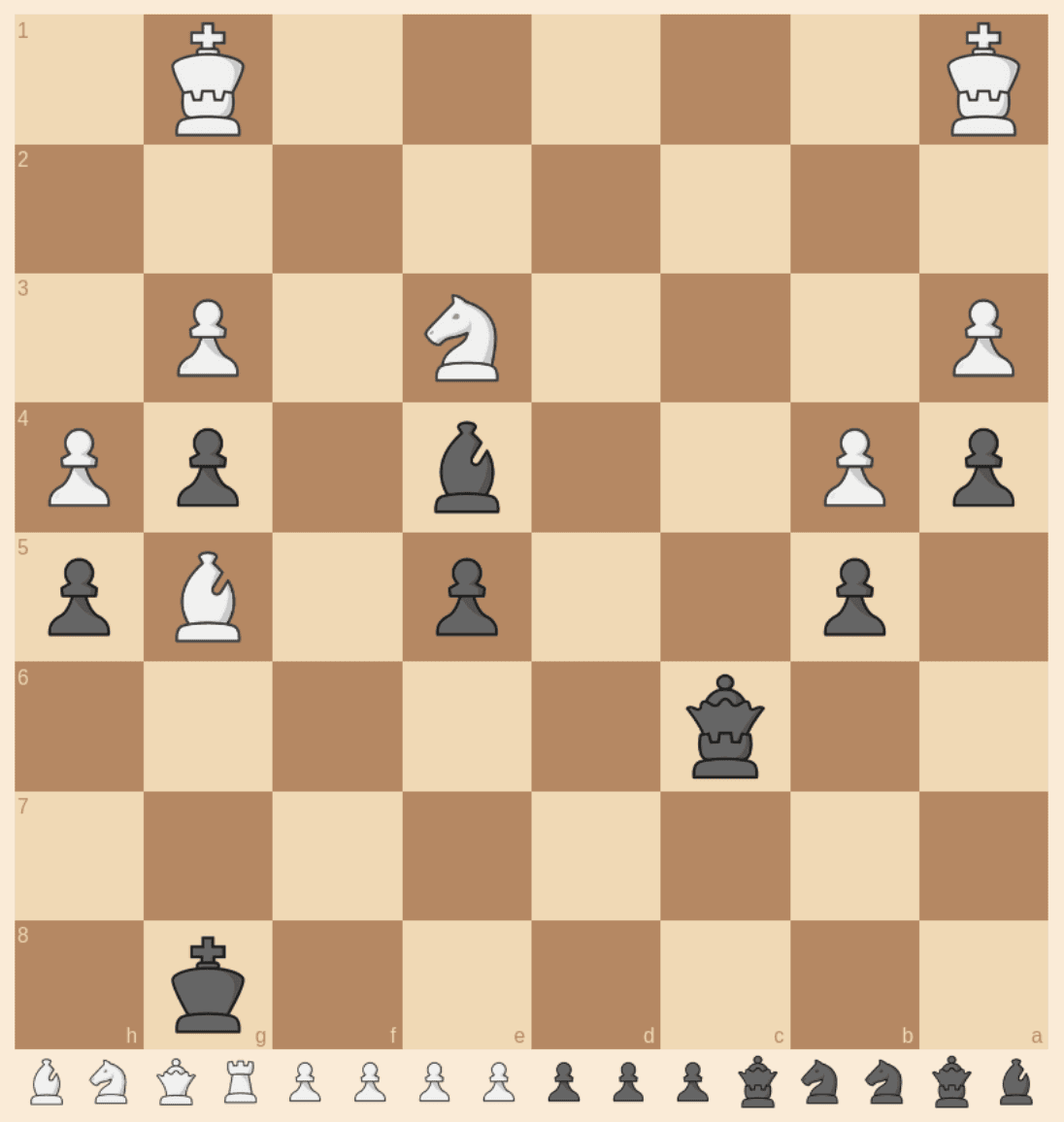

The following position came up when I was playing Haft Chess, and I noticed an interesting phenomenon.

In this position, if black moves their queenrook to c3, then white has the option to move their kingrook to b2 thereby forcing it to be a king, and since if the black queenrook were a queen, white's move would have been illegal, black's queenrook must collapse to just a rook.

Similarly, when a pawn promotes (to an indeterminate piece), a similar maneuver can be used by the other player to move their king into a square a rook's move away from the promoted pawn, thereby reducing the promoted piece to a mere bishop or knight.

Thing 58: Haft Schroedinger Chess, Now with Slightly Better UI

I added better piece art to my implementation of Haft Schroedinger Chess, and it now also shows which pieces have been taken. Also I think I fixed a bug where taking a pawn near an en-passantable position would sometimes kill the attacking pawn.

Thing 57: Making Hybrid Chess Pieces

I made some hybrid chess pieces which I'm going to use in the next version of my Haft Schroedinger Chess. These are based on the staunty chess pieces, created by sadsnake1 which are CC BY-NC-SA 4.0 licensed. As such, these are so licensed.

Thing 55: Haft Schroedinger Chess

As mentioned in thing 51, I've been working on a playable version of Haft Schroedinger Chess. I've come across a one other implementation here, but that implementation is not true to the full rules of the original description: it puts every piece including pawns into superposition at the start, whereas pawns are not supposed to be, and it also doesn't account for entanglements arising from checks. I made this implementation to solve those problems.

It tracks entanglements from checks invisibly though, since I was uncertain how to conveniently display them. I want to improve the UI quite a bit, but that will come in time. I also wanted to make art for each of the 31 valid piece-superpositions, but that will also come in time. I also want to eventually make it playable over the internet. If you notice any bugs, please reach out to me.

Thing 53: Reachable Chess Boards (Part 1)

Ok, so I've wondered for a while how many chess board states are valid. At first you might think: ok, plop down every piece in every spot and there you go. But, there are a few things to verify. I'm going to distinguish these into 3 categories: unplayable, playable but unreachable, and reachable.

First, the full set we're subdividing: each square on the board is either empty or has exactly one piece of the standard chess pieces on it. Technically, and this will be relevant later, we'll also include castling rights, and en-passantable pawns as part of a board state (and they will not allowed to be set to obviously false values). A board is "playable" if it satisfies 3 conditions: (1) there are no pawns in the first or eighth ranks, (2) there is exactly one king of each color, and (3) a king is not in check on the other color's turn. These are the boards you could pick up and reasonably play without inventing new rules for how to handle pawns or kings in those situations.

So, we now have the division between playable and unplayable boards. But how many boards that are playable, can be reached in theory from a valid sequence of moves in a chess game? Off the top of my head, there are a few reasons a board might be unreachable: (1) there are too many pieces on the board (either side has more than 16, or there are more than 9 queens or same-colored bishops, or more than 10 pieces of the same type, or more than 8 pawns), (2) too many pieces given the number of pawn captures needed (e.g. if white has doubled pawns and black still has all their pieces), (3) blocked-in pieces (e.g. pawns on b4 and d4 and white's dark-square bishop on a square other than c1 while also either still having all of their pawns so it can't have been promoted).

There are certainly more, that seem a bit harder to codify into straightforward rules. I'll leave it as an exercise for the reader, but here's an example if you want a spoiler.

!!SPOILER!! Unreachable board state !!SPOILER!!

FEN:rnbqkbQr/ppppp1pp/8/8/8/8/PPPPPP1P/RNBQKBNR b KQkq - 0 1

Anyway, I'm hoping to spend the next part of this sequence of blog posts discussing this in greater detail, and trying to define comprehensive combinatorial rules.

Thing 51: [WIP] Haft Schroedinger Chess

I started working on a playable version of Haft Schroedinger Chess. I was originally gonna try validate moves the easy brute-force way, but it's too computationally expensive. I'll have to keep working on it.

Thing 39: Deriving Point Values for Chess Pieces (Part 3)

In today's episode of deriving point values for chess pieces from endgame tablebases, we're gonna try to see how robust our numbers from before were. So, we'll try computing a few variants.

Variant 1: not starting with obviously promoted pieces (i.e. no triple knights, bishops or rooks or double queens on the same side.)

| # pieces | P | N | B | R | Q |

|---|---|---|---|---|---|

| 1 | 2.29 | 0.00 | 0.00 | 5.00 | 4.83 |

| 2 | 3.02 | 1.47 | 2.41 | 5.00 | 9.39 |

| 3 | 2.22 | 1.93 | 2.48 | 5.00 | 9.20 |

| 4 | 1.96 | 2.20 | 2.67 | 5.00 | 9.22 |

| 5 | 1.76 | 2.30 | 2.79 | 5.00 | 9.26 |

Not too much interesting to see here, seems to generally match the previous numbers, with the only notable thing being that the queen trends up instead of down starting at 3 pieces.

Variant 2: a different loss function (sum of squares on un-sigmoided values)

| # pieces | P | N | B | R | Q |

|---|---|---|---|---|---|

| 1 | 2.29 | 0.00 | 0.00 | 5.00 | 4.83 |

| 2 | 2.95 | 1.36 | 1.74 | 5.00 | 5.54 |

| 3 | 3.30 | 2.25 | 2.52 | 5.00 | 6.85 |

| 4 | 2.62 | 2.34 | 2.36 | 5.00 | 6.92 |

| 5 | 2.26 | 2.30 | 2.47 | 5.00 | 7.18 |

Oh boy, is this interesting? Seems like with this loss function the queen is worth waaaay less. But still trending upward. Pawns are also worth way more. Not sure what to think about that.

Variant 3: another different loss function (logarithm proper scoring rule)

| # pieces | P | N | B | R | Q |

|---|---|---|---|---|---|

| 1 | 2.29 | 0.00 | 0.00 | 5.00 | 4.83 |

| 2 | 3.23 | 1.50 | 2.30 | 5.00 | 8.72 |

| 3 | 2.31 | 2.00 | 2.39 | 5.00 | 8.59 |

| 4 | 2.02 | 2.22 | 2.57 | 5.00 | 8.65 |

| 5 | 1.82 | 2.35 | 2.72 | 5.00 | 8.67 |

Very interesting, this one is way closer to the same as the original values I got, but with the queen just slightly lower in value.

Code below. Download stats.json from the lichess tablebase to run the code.

import fs from "node:fs";

const stats = JSON.parse(fs.readFileSync("stats.json", "utf-8"));

type Data = {

material: string;

gamePoints: number;

}[];

const getData = (options?: {

/**

* How much a cursed win counts as (cursed win = would be a win if not for the 50-move rule)

* Any value between 0 and 1 makes sense.

*/

curseFactor?: number;

nonKingPieceCount?: number;

tooManyPieces?: string[];

}): Data => {

const {

curseFactor = 0.25,

nonKingPieceCount = 5,

tooManyPieces = [],

} = options ?? {};

return Object.entries(stats)

.map(([key, e]: [string, any]) => {

const wins = e.histogram.white.wdl[2] + e.histogram.black.wdl[-2];

const cursedWins =

e.histogram.white.wdl[1] + e.histogram.black.wdl[-1];

const draws = e.histogram.white.wdl[0] + e.histogram.black.wdl[0];

const blessedLosses =

e.histogram.white.wdl[-1] + e.histogram.black.wdl[1];

const losses = e.histogram.white.wdl[-2] + e.histogram.black.wdl[2];

const total = wins + cursedWins + draws + blessedLosses + losses;

const gamePoints =

(wins +

curseFactor * cursedWins -

curseFactor * blessedLosses -

losses) /

total;

return {

material: key,

gamePoints,

};

})

.filter(

({ material }) =>

material.length === "KvK".length + nonKingPieceCount &&

tooManyPieces.every((substr) => !material.includes(substr)),

);

};

type Piece = "P" | "N" | "B" | "R" | "Q";

type Points = Record<Piece, number>;

type LossFunc = (goal: number, prediction: number) => number;

const sigmoid = (x: number) => Math.tanh(x);

const sum = (arr: number[]) => arr.reduce((a, b) => a + b, 0);

const predictGame = (points: Points, material: [string, string]) => {

const [mat1, mat2] = material;

return sigmoid(

sum([...mat1].map((p) => (p in points ? points[p as Piece] : 0))) -

sum([...mat2].map((p) => (p in points ? points[p as Piece] : 0))),

);

};

const getBaselineLoss = (data: Data, lossFunc: LossFunc) => {

let loss = 0;

for (const { gamePoints } of data) {

const prediction = 0;

loss += lossFunc(gamePoints, prediction);

}

return loss;

};

const computeLoss = (data: Data, points: Points, lossFunc: LossFunc) => {

let loss = 0;

for (const { material, gamePoints } of data) {

const prediction = predictGame(

points,

material.split("v") as [string, string],

);

loss += lossFunc(gamePoints, prediction);

}

return loss / getBaselineLoss(data, lossFunc);

};

const optimizeInRange = (

data: Data,

constraints: Record<Piece, { min: number; max: number; step: number }>,

lossFunc: LossFunc,

): Points => {

let bestLoss = Infinity;

let best: Points | null = null;

let i = 0;

for (

let p = constraints.P.min;

p <= constraints.P.max;

p += constraints.P.step

) {

for (

let n = constraints.N.min;

n <= constraints.N.max;

n += constraints.N.step

) {

for (

let b = constraints.B.min;

b <= constraints.B.max;

b += constraints.B.step

) {

for (

let r = constraints.R.min;

r <= constraints.R.max;

r += constraints.R.step

) {

for (

let q = constraints.Q.min;

q <= constraints.Q.max;

q += constraints.Q.step

) {

i += 1;

const pointValues = {

P: p,

N: n,

B: b,

R: r,

Q: q,

};

const loss = computeLoss(data, pointValues, lossFunc);

if (loss < bestLoss) {

best = pointValues;

bestLoss = loss;

}

}

}

}

}

}

return best as Points;

};

const nudge = (

data: Data,

points: Points,

epsilon: number,

lossFunc: LossFunc,

) => {

return optimizeInRange(

data,

Object.fromEntries(

Object.entries(points).map(([key, value]) => {

return [

key,

{

min: value - epsilon,

max: value + epsilon,

step: epsilon,

},

];

}),

) as any,

lossFunc,

);

};

const optimizeForEpsilon = (

data: Data,

initialPoints: Points,

epsilon: number,

lossFunc: LossFunc,

) => {

let prevLoss = 0;

let points = { ...initialPoints };

for (let i = 0; i < 1000; i += 1) {

points = nudge(data, points, epsilon, lossFunc);

const loss = computeLoss(data, points, lossFunc);

if (loss === prevLoss) {

break;

}

prevLoss = loss;

if (i > 998) {

console.log("TOOK TOO LONG");

}

}

return points;

};

const getPointValues = (

data: Data,

lossFunc: LossFunc,

initialValues = {

P: 0,

N: 0,

B: 0,

R: 0,

Q: 0,

},

) => {

let pointValues = initialValues;

pointValues = optimizeForEpsilon(data, pointValues, 1, lossFunc);

pointValues = optimizeForEpsilon(data, pointValues, 0.1, lossFunc);

pointValues = optimizeForEpsilon(data, pointValues, 0.01, lossFunc);

pointValues = optimizeForEpsilon(data, pointValues, 0.001, lossFunc);

return pointValues;

};

/**

* Normalize a set of points so that the rook is 5 (and queen will usually be around 9).

*/

const normalize = (points: Points) => {

const factor = 5 / points.R;

return {

P: points.P * factor,

N: points.N * factor,

B: points.B * factor,

R: points.R * factor,

Q: points.Q * factor,

};

};

const formatTable = (i: number, points: Points) => {

return `| ${points.P.toFixed(2)} | ${points.N.toFixed(2)} | ${points.B.toFixed(2)} | ${points.R.toFixed(2)} | ${points.Q.toFixed(2)} |`;

};

// Compute point values for pieces based on number of pieces on the board.

console.log("Variant 1: not starting with obviously promoted pieces");

for (let i = 1; i <= 5; i += 1) {

const lossFunc: LossFunc = (a, b) => Math.pow(a - b, 2);

const data = getData({

nonKingPieceCount: i,

tooManyPieces: ["BBB", "NNN", "RRR", "QQ"],

});

const pointValues = getPointValues(data, lossFunc);

console.log(`${i} non-king pieces`);

console.log(formatTable(i, normalize(pointValues)));

console.log("loss", computeLoss(data, pointValues, lossFunc));

}

console.log("Variant 2: different loss function");

for (let i = 1; i <= 5; i += 1) {

const lossFunc: LossFunc = (a, b) =>

Math.pow(Math.atanh(a) - Math.atanh(b), 2);

const data = getData({

nonKingPieceCount: i,

});

const pointValues = getPointValues(data, lossFunc);

console.log(`${i} non-king pieces`);

console.log(formatTable(i, normalize(pointValues)));

console.log("loss", computeLoss(data, pointValues, lossFunc));

}

console.log("Variant 3: another different loss function");

for (let i = 1; i <= 5; i += 1) {

const lossFunc: LossFunc = (a, b) =>

((a + 1) / 2) * Math.log((b + 1) / 2) +

(1 - (a + 1) / 2) * Math.log(1 - (b + 1) / 2);

const data = getData({

nonKingPieceCount: i,

});

const pointValues = getPointValues(data, lossFunc);

console.log(`${i} non-king pieces`);

console.log(formatTable(i, normalize(pointValues)));

console.log("loss", computeLoss(data, pointValues, lossFunc));

}

Thing 38: Deriving Point Values for Chess Pieces (Part 2)

Part 1: here.

In my previous iteration of this, I ended up giving values based on an average over all piece combinations in the endgame tablebase. I was curious what would happen if I subdivided it based on number of (non-king) pieces on the board. Here's the numbers I got today (rounded to 2 decimal places, after normalizing to R = 5):

| # pieces | P | N | B | R | Q |

|---|---|---|---|---|---|

| 1 | 2.29 | 0 | 0 | 5 | 4.83 |

| 2 | 3.02 | 1.47 | 2.41 | 5 | 9.39 |

| 3 | 2.22 | 1.94 | 2.44 | 5 | 9.18 |

| 4 | 1.95 | 2.21 | 2.61 | 5 | 9.10 |

| 5 | 1.76 | 2.34 | 2.76 | 5 | 9.05 |

I've learned that the row based on a single non-king piece being on the board is almost entirely useless. The queen is worse than the rook because it's more likely to stalemate. The bishop and knight are worth literally zero because they can't checkmate on their own. The rest is interesting though.

We'll notice a few trends, though: as piece count goes up, pawn and queen values go down, and knight and bishop values go up. Those trends point in the direction of traditional point values for pieces. Very interesting. I want to try fitting a logistic curve to these numbers for rows 2-5 to try guessing what row 6 values will be, and then eventually when the 8 piece tablebase comes out (since they count kings as pieces), compare with what actually comes out.

This really makes you think that as you get closer to the endgame, the bishop and knight both get weaker relative to other pieces and the pawn gets stronger.

Code below. Download stats.json from the lichess tablebase to run the code.

import fs from "node:fs";

const stats = JSON.parse(fs.readFileSync("stats.json", "utf-8"));

type Data = {

material: string;

gamePoints: number;

}[];

const getData = (options?: {

/**

* How much a cursed win counts as (cursed win = would be a win if not for the 50-move rule)

* Any value between 0 and 1 makes sense.

*/

curseFactor?: number;

nonKingPieceCount?: number;

}): Data => {

const { curseFactor = 0.25, nonKingPieceCount = 5 } = options ?? {};

return Object.entries(stats)

.map(([key, e]: [string, any]) => {

const wins = e.histogram.white.wdl[2] + e.histogram.black.wdl[-2];

const cursedWins =

e.histogram.white.wdl[1] + e.histogram.black.wdl[-1];

const draws = e.histogram.white.wdl[0] + e.histogram.black.wdl[0];

const blessedLosses =

e.histogram.white.wdl[-1] + e.histogram.black.wdl[1];

const losses = e.histogram.white.wdl[-2] + e.histogram.black.wdl[2];

const total = wins + cursedWins + draws + blessedLosses + losses;

const gamePoints =

(wins +

curseFactor * cursedWins -

curseFactor * blessedLosses -

losses) /

total;

return {

material: key,

gamePoints,

};

})

.filter(

({ material }) =>

material.length === "KvK".length + nonKingPieceCount,

);

};

type Piece = "P" | "N" | "B" | "R" | "Q";

type Points = Record<Piece, number>;

const sigmoid = (x: number) => Math.tanh(x);

const sum = (arr: number[]) => arr.reduce((a, b) => a + b, 0);

const predictGame = (points: Points, material: [string, string]) => {

const [mat1, mat2] = material;

return sigmoid(

sum([...mat1].map((p) => (p in points ? points[p as Piece] : 0))) -

sum([...mat2].map((p) => (p in points ? points[p as Piece] : 0))),

);

};

const getBaselineLoss = (data: Data) => {

let loss = 0;

for (const { gamePoints } of data) {

const prediction = 0;

loss += Math.pow(gamePoints - prediction, 2);

}

return loss;

};

const computeLoss = (data: Data, points: Points) => {

let loss = 0;

for (const { material, gamePoints } of data) {

const prediction = predictGame(

points,

material.split("v") as [string, string],

);

loss += Math.pow(gamePoints - prediction, 2);

}

return loss / getBaselineLoss(data);

};

const optimizeInRange = (

data: Data,

constraints: Record<Piece, { min: number; max: number; step: number }>,

): Points => {

let bestLoss = Infinity;

let best: Points | null = null;

let i = 0;

for (

let p = constraints.P.min;

p <= constraints.P.max;

p += constraints.P.step

) {

for (

let n = constraints.N.min;

n <= constraints.N.max;

n += constraints.N.step

) {

for (

let b = constraints.B.min;

b <= constraints.B.max;

b += constraints.B.step

) {

for (

let r = constraints.R.min;

r <= constraints.R.max;

r += constraints.R.step

) {

for (

let q = constraints.Q.min;

q <= constraints.Q.max;

q += constraints.Q.step

) {

i += 1;

const pointValues = {

P: p,

N: n,

B: b,

R: r,

Q: q,

};

const loss = computeLoss(data, pointValues);

if (loss < bestLoss) {

best = pointValues;

bestLoss = loss;

}

}

}

}

}

}

return best as Points;

};

const nudge = (data: Data, points: Points, epsilon: number) => {

return optimizeInRange(

data,

Object.fromEntries(

Object.entries(points).map(([key, value]) => {

return [

key,

{

min: value - epsilon,

max: value + epsilon,

step: epsilon,

},

];

}),

) as any,

);

};

const optimizeForEpsilon = (

data: Data,

initialPoints: Points,

epsilon: number,

) => {

let prevLoss = 0;

let points = { ...initialPoints };

for (let i = 0; i < 1000; i += 1) {

points = nudge(data, points, epsilon);

const loss = computeLoss(data, points);

if (loss === prevLoss) {

break;

}

prevLoss = loss;

if (i > 998) {

console.log("TOOK TOO LONG");

}

}

return points;

};

const getPointValues = (

data: Data,

initialValues = {

P: 0,

N: 0,

B: 0,

R: 0,

Q: 0,

},

) => {

let pointValues = initialValues;

pointValues = optimizeForEpsilon(data, pointValues, 1);

pointValues = optimizeForEpsilon(data, pointValues, 0.1);

pointValues = optimizeForEpsilon(data, pointValues, 0.01);

pointValues = optimizeForEpsilon(data, pointValues, 0.001);

return pointValues;

};

/**

* Normalize a set of points so that the rook is 5 (and queen will usually be around 9).

*/

const normalize = (points: Points) => {

const factor = 5 / points.R;

return {

P: points.P * factor,

N: points.N * factor,

B: points.B * factor,

R: points.R * factor,

Q: points.Q * factor,

};

};

// Compute point values for pieces based on number of pieces on the board.

for (let i = 1; i <= 5; i += 1) {

const data = getData({ nonKingPieceCount: i });

const pointValues = getPointValues(data);

console.log(`${i} non-king pieces`);

console.log(normalize(pointValues));

console.log("loss", computeLoss(data, pointValues));

}

Thing 37: Deriving Point Values for Chess Pieces (Part 1)

I was curious to see if I could determine point values for chess pieces based on endgame tablebases. I use the model that the difference of the sum of the players' pieces should determine the expected value of the game to a given player via a sigmoid function. The end result is the following:

- pawn: 0.327

- knight: 0.403

- bishop: 0.479

- rook: 0.889

- queen: 1.610

The pawns are overrated here, because we use endgame tablebases and in endgames, pawns are much more likely to promote, and therefore more valuable. Queen to rook ratio is pretty close to 9:5, and bishops and knights are slightly weaker relatively than the normal point values associated with them. Here are the point values rescaled to make the rook be worth 5:

- pawn: 1.84

- knight: 2.27

- bishop: 2.69

- rook: 5.00

- queen: 9.06

Code below. Download stats.json from the lichess tablebase to run the code.

import fs from "node:fs";

const stats = JSON.parse(fs.readFileSync("stats.json", "utf-8"));

// How much a cursed win counts as (cursed win = would be a win if not for the 50-move rule)

const CURSE_FACTOR = 0.5;

const data = Object.entries(stats).map(([key, e]: [string, any]) => {

const wins = e.histogram.white.wdl[2] + e.histogram.black.wdl[-2];

const cursedWins = e.histogram.white.wdl[1] + e.histogram.black.wdl[-1];

const draws = e.histogram.white.wdl[0] + e.histogram.black.wdl[0];

const blessedLosses = e.histogram.white.wdl[-1] + e.histogram.black.wdl[1];

const losses = e.histogram.white.wdl[-2] + e.histogram.black.wdl[2];

const total = wins + cursedWins + draws + blessedLosses + losses;

const gamePoints =

(wins +

CURSE_FACTOR * cursedWins -

CURSE_FACTOR * blessedLosses -

losses) /

total;

return {

material: key,

gamePoints,

};

});

type Piece = "P" | "N" | "B" | "R" | "Q";

type Points = Record<Piece, number>;

const sigmoid = (x: number) => Math.tanh(x);

const sum = (arr: number[]) => arr.reduce((a, b) => a + b, 0);

const predictGame = (points: Points, material: [string, string]) => {

const [mat1, mat2] = material;

return sigmoid(

sum([...mat1].map((p) => (p in points ? points[p as Piece] : 0))) -

sum([...mat2].map((p) => (p in points ? points[p as Piece] : 0))),

);

};

const baselineLoss = (() => {

let loss = 0;

for (const { gamePoints } of data) {

const prediction = 0;

loss += Math.pow(gamePoints - prediction, 2);

}

return loss;

})();

console.log("BASELINE LOSS", baselineLoss);

const computeLoss = (points: Points) => {

let loss = 0;

for (const { material, gamePoints } of data) {

const prediction = predictGame(

points,

material.split("v") as [string, string],

);

loss += Math.pow(gamePoints - prediction, 2);

}

return loss;

};

const optimizeInRange = (

constraints: Record<Piece, { min: number; max: number; step: number }>,

): Points => {

let bestLoss = Infinity;

let best: Points | null = null;

let i = 0;

for (

let p = constraints.P.min;

p <= constraints.P.max;

p += constraints.P.step

) {

for (

let n = constraints.N.min;

n <= constraints.N.max;

n += constraints.N.step

) {

for (

let b = constraints.B.min;

b <= constraints.B.max;

b += constraints.B.step

) {

for (

let r = constraints.R.min;

r <= constraints.R.max;

r += constraints.R.step

) {

for (

let q = constraints.Q.min;

q <= constraints.Q.max;

q += constraints.Q.step

) {

i += 1;

const pointValues = {

P: p,

N: n,

B: b,

R: r,

Q: q,

};

const loss = computeLoss(pointValues);

if (loss < bestLoss) {

best = pointValues;

bestLoss = loss;

// console.log(i, bestLoss);

}

}

}

}

}

}

return best as Points;

};

const nudge = (points: Points, epsilon: number) => {

return optimizeInRange(

Object.fromEntries(

Object.entries(points).map(([key, value]) => {

return [

key,

{

min: value - epsilon,

max: value + epsilon,

step: epsilon,

},

];

}),

) as any,

);

};

const optimizeForEpsilon = (initialPoints: Points, epsilon: number) => {

let prevLoss = 0;

let points = { ...initialPoints };

for (let i = 0; i < 1000; i += 1) {

points = nudge(points, epsilon);

const loss = computeLoss(points);

if (loss === prevLoss) {

break;

}

prevLoss = loss;

console.log(i, loss);

if (i > 998) {

console.log("TOOK TOO LONG");

}

}

return points;

};

let pointValues = {

P: 0,

N: 0,

B: 0,

R: 0,

Q: 0,

};

pointValues = optimizeForEpsilon(pointValues, 1);

pointValues = optimizeForEpsilon(pointValues, 0.1);

pointValues = optimizeForEpsilon(pointValues, 0.01);

pointValues = optimizeForEpsilon(pointValues, 0.001);

console.log(pointValues);