Thing 48: Fall: A Pico 8 Game

Threw together a new tiny Pico-8 game. Fall down the endless abyss and collect coins to get a high score.

Thing 47: An Idea for Virtual Badges

I've been thinking about a fun idea recently for small digital collectibles I'm calling virtual badges. Each badge is a tiny 64x64 pixel image that contains a cryptographically verifiable certificate of ownership embedded directly inside it. This isn't some NFT scam; there's no marketplace or blockchain in sight. They're completely non-transferable, so it can never become that. It's more like little signed virtual trophies. With an open standard, anyone can mint a badge for anyone else, and anyone can verify that it was genuinely issued and genuinely accepted, all without even needing an internet connection.

Here's the idea: users who own badges and mints that mint badges use a public key as their identity. A badge will contain structured info such as the minter's public key, the owner's public key, a timestamp, and a hash of the image. The minter signs this data with their private key, producing a cryptographic signature that says, "I affirm that I issued this badge to this user". Then the user signs as well, proving they accept it. The final certificate will be bundled into the image file in metadata so the file alone will contain the badge info, the minter's signature, and the owner's signature. Verification is just signature checking and recomputing the hash of the image. No network calls. No global registry. No blockchain. No centralized authority.
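Here's a rough sketch of that sign-and-verify flow in TypeScript, using Node's built-in crypto module. The standard isn't finalized, so everything specific here is an assumption made up for the sketch: the field names, Ed25519 keys, SHA-256 over the pixel data (so that embedding metadata in the file doesn't change the hash), the JSON serialization, and the choice to have the owner sign the info together with the minter's signature.

```typescript
import { createHash, createPublicKey, generateKeyPairSync, sign, verify } from "node:crypto";
import type { KeyObject } from "node:crypto";

// Hypothetical field names; the real standard may differ.
type BadgeInfo = {
  minter: string; // minter's public key (base64-encoded DER)
  owner: string; // owner's public key (base64-encoded DER)
  timestamp: string;
  imageHash: string; // SHA-256 of the pixel data, hex-encoded
};

type Certificate = { info: BadgeInfo; minterSig: string; ownerSig: string };

const keyId = (key: KeyObject): string =>
  key.export({ type: "spki", format: "der" }).toString("base64");

const keyFromId = (id: string): KeyObject =>
  createPublicKey({ key: Buffer.from(id, "base64"), format: "der", type: "spki" });

const hashImage = (pixels: Uint8Array): string =>
  createHash("sha256").update(pixels).digest("hex");

// Fixed field order so signer and verifier serialize the info identically.
const infoBytes = (info: BadgeInfo): Buffer =>
  Buffer.from(JSON.stringify([info.minter, info.owner, info.timestamp, info.imageHash]));

// Assumption: the owner's acceptance covers the info plus the minter's signature.
const acceptanceBytes = (info: BadgeInfo, minterSig: string): Buffer =>
  Buffer.concat([infoBytes(info), Buffer.from(minterSig, "base64")]);

// The minter affirms "I issued this badge to this user".
const mint = (info: BadgeInfo, minterPrivateKey: KeyObject): string =>
  sign(null, infoBytes(info), minterPrivateKey).toString("base64");

// The owner affirms "I accept this badge".
const accept = (info: BadgeInfo, minterSig: string, ownerPrivateKey: KeyObject): string =>
  sign(null, acceptanceBytes(info, minterSig), ownerPrivateKey).toString("base64");

// Offline verification: recompute the image hash and check both signatures.
const verifyBadge = (cert: Certificate, pixels: Uint8Array): boolean => {
  const { info, minterSig, ownerSig } = cert;
  if (hashImage(pixels) !== info.imageHash) return false;
  const minterOk = verify(
    null,
    infoBytes(info),
    keyFromId(info.minter),
    Buffer.from(minterSig, "base64"),
  );
  const ownerOk = verify(
    null,
    acceptanceBytes(info, minterSig),
    keyFromId(info.owner),
    Buffer.from(ownerSig, "base64"),
  );
  return minterOk && ownerOk;
};

// Usage: mint a badge for a made-up 64x64 RGBA image, accept it, then verify it.
const minterKeys = generateKeyPairSync("ed25519");
const ownerKeys = generateKeyPairSync("ed25519");
const pixels = new Uint8Array(64 * 64 * 4).fill(7);
const info: BadgeInfo = {
  minter: keyId(minterKeys.publicKey),
  owner: keyId(ownerKeys.publicKey),
  timestamp: "2026-02-01T00:00:00Z",
  imageHash: hashImage(pixels),
};
const minterSig = mint(info, minterKeys.privateKey);
const ownerSig = accept(info, minterSig, ownerKeys.privateKey);
const cert: Certificate = { info, minterSig, ownerSig };
console.log(verifyBadge(cert, pixels));
```

Nothing in verifyBadge touches the network: it only needs the certificate and the pixels, which is the whole point.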

If they want, users and mints can optionally include information like a display name or URL. To make sharing your public key easier as a user, I imagine one or more websites that let you host it at a URL with an easy-to-remember alias.

The goal is for collecting these to feel like a game of hunting and earning badges. I'm trying to finalize the first version of this open standard and then I'm going to create the first mint. Stay tuned for more.

Thing 44: Luigi's Limerick

There once was a man named Luigi.

He was cleaning his house with a squeegie.

But his house was haunted;

A single Boo taunted.

It gave him the heebie-jeebies.

Thing 41: A Bad Drawing of some WcDonald's French Fries

A Bad Drawing of some WcDonald's French Fries.

Thing 39: Deriving Point Values for Chess Pieces (Part 3)

In today's episode of deriving point values for chess pieces from endgame tablebases, we're gonna try to see how robust our numbers from before were. So, we'll try computing a few variants.

Variant 1: not starting with obviously promoted pieces (i.e. no triple knights, bishops or rooks or double queens on the same side.)

| # pieces | P | N | B | R | Q |
|---|---|---|---|---|---|
| 1 | 2.29 | 0.00 | 0.00 | 5.00 | 4.83 |
| 2 | 3.02 | 1.47 | 2.41 | 5.00 | 9.39 |
| 3 | 2.22 | 1.93 | 2.48 | 5.00 | 9.20 |
| 4 | 1.96 | 2.20 | 2.67 | 5.00 | 9.22 |
| 5 | 1.76 | 2.30 | 2.79 | 5.00 | 9.26 |

Not much of interest here: it generally matches the previous numbers. The only notable difference is that the queen trends up instead of down starting at 3 pieces.

Variant 2: a different loss function (sum of squares on un-sigmoided values)

| # pieces | P | N | B | R | Q |
|---|---|---|---|---|---|
| 1 | 2.29 | 0.00 | 0.00 | 5.00 | 4.83 |
| 2 | 2.95 | 1.36 | 1.74 | 5.00 | 5.54 |
| 3 | 3.30 | 2.25 | 2.52 | 5.00 | 6.85 |
| 4 | 2.62 | 2.34 | 2.36 | 5.00 | 6.92 |
| 5 | 2.26 | 2.30 | 2.47 | 5.00 | 7.18 |

Oh boy, is this interesting? Seems like with this loss function the queen is worth waaaay less. But still trending upward. Pawns are also worth way more. Not sure what to think about that.

Variant 3: another different loss function (logarithmic proper scoring rule)

| # pieces | P | N | B | R | Q |
|---|---|---|---|---|---|
| 1 | 2.29 | 0.00 | 0.00 | 5.00 | 4.83 |
| 2 | 3.23 | 1.50 | 2.30 | 5.00 | 8.72 |
| 3 | 2.31 | 2.00 | 2.39 | 5.00 | 8.59 |
| 4 | 2.02 | 2.22 | 2.57 | 5.00 | 8.65 |
| 5 | 1.82 | 2.35 | 2.72 | 5.00 | 8.67 |

Very interesting: this one is way closer to the original values I got, just with the queen slightly lower in value.
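For reference, here's how I'd write the three loss functions as math. This is my reading of the code, with notation picked just for this sketch: $g$ is the observed game points for a material configuration (in $[-1, 1]$) and $\hat{g}$ is the model's prediction. Variant 1 keeps the original squared error $\ell_1$ and only changes which configurations are included; variants 2 and 3 swap in $\ell_2$ and $\ell_3$.

$$
\ell_1(g, \hat{g}) = (g - \hat{g})^2
$$

$$
\ell_2(g, \hat{g}) = \left(\operatorname{artanh} g - \operatorname{artanh} \hat{g}\right)^2
$$

$$
\ell_3(g, \hat{g}) = p \ln q + (1 - p) \ln(1 - q), \qquad p = \frac{g + 1}{2}, \quad q = \frac{\hat{g} + 1}{2}
$$

One thing to watch: as written, $\ell_3$ is the log score itself (never positive) rather than its negation. As far as I can tell that still works out, because the total is divided by a baseline summed with the same function, so the two negative sums give a positive ratio, and minimizing that ratio picks the point values with the best score.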

Code below. Download stats.json from the lichess tablebase to run the code.

    import fs from "node:fs";

    const stats = JSON.parse(fs.readFileSync("stats.json", "utf-8"));

    type Data = {
      material: string;
      gamePoints: number;
    }[];

    const getData = (options?: {
      /**
       * How much a cursed win counts as (cursed win = would be a win if not for the 50-move rule)
       * Any value between 0 and 1 makes sense.
       */
      curseFactor?: number;
      nonKingPieceCount?: number;
      tooManyPieces?: string[];
    }): Data => {
      const {
        curseFactor = 0.25,
        nonKingPieceCount = 5,
        tooManyPieces = [],
      } = options ?? {};
      return Object.entries(stats)
        .map(([key, e]: [string, any]) => {
          const wins = e.histogram.white.wdl[2] + e.histogram.black.wdl[-2];
          const cursedWins =
            e.histogram.white.wdl[1] + e.histogram.black.wdl[-1];
          const draws = e.histogram.white.wdl[0] + e.histogram.black.wdl[0];
          const blessedLosses =
            e.histogram.white.wdl[-1] + e.histogram.black.wdl[1];
          const losses = e.histogram.white.wdl[-2] + e.histogram.black.wdl[2];
          const total = wins + cursedWins + draws + blessedLosses + losses;
          const gamePoints =
            (wins +
              curseFactor * cursedWins -
              curseFactor * blessedLosses -
              losses) /
            total;
          return {
            material: key,
            gamePoints,
          };
        })
        .filter(
          ({ material }) =>
            material.length === "KvK".length + nonKingPieceCount &&
            tooManyPieces.every((substr) => !material.includes(substr)),
        );
    };

    type Piece = "P" | "N" | "B" | "R" | "Q";
    type Points = Record<Piece, number>;
    type LossFunc = (goal: number, prediction: number) => number;

    const sigmoid = (x: number) => Math.tanh(x);
    const sum = (arr: number[]) => arr.reduce((a, b) => a + b, 0);

    // Predicted game points for the first side: tanh of the material difference.
    const predictGame = (points: Points, material: [string, string]) => {
      const [mat1, mat2] = material;
      return sigmoid(
        sum([...mat1].map((p) => (p in points ? points[p as Piece] : 0))) -
          sum([...mat2].map((p) => (p in points ? points[p as Piece] : 0))),
      );
    };

    // Loss of the trivial model that predicts a draw (0) for everything.
    const getBaselineLoss = (data: Data, lossFunc: LossFunc) => {
      let loss = 0;
      for (const { gamePoints } of data) {
        const prediction = 0;
        loss += lossFunc(gamePoints, prediction);
      }
      return loss;
    };

    // Total loss relative to the baseline.
    const computeLoss = (data: Data, points: Points, lossFunc: LossFunc) => {
      let loss = 0;
      for (const { material, gamePoints } of data) {
        const prediction = predictGame(
          points,
          material.split("v") as [string, string],
        );
        loss += lossFunc(gamePoints, prediction);
      }
      return loss / getBaselineLoss(data, lossFunc);
    };

    // Brute-force grid search over every combination of piece values in the given ranges.
    const optimizeInRange = (
      data: Data,
      constraints: Record<Piece, { min: number; max: number; step: number }>,
      lossFunc: LossFunc,
    ): Points => {
      let bestLoss = Infinity;
      let best: Points | null = null;
      let i = 0;
      for (
        let p = constraints.P.min;
        p <= constraints.P.max;
        p += constraints.P.step
      ) {
        for (
          let n = constraints.N.min;
          n <= constraints.N.max;
          n += constraints.N.step
        ) {
          for (
            let b = constraints.B.min;
            b <= constraints.B.max;
            b += constraints.B.step
          ) {
            for (
              let r = constraints.R.min;
              r <= constraints.R.max;
              r += constraints.R.step
            ) {
              for (
                let q = constraints.Q.min;
                q <= constraints.Q.max;
                q += constraints.Q.step
              ) {
                i += 1;
                const pointValues = {
                  P: p,
                  N: n,
                  B: b,
                  R: r,
                  Q: q,
                };
                const loss = computeLoss(data, pointValues, lossFunc);
                if (loss < bestLoss) {
                  best = pointValues;
                  bestLoss = loss;
                }
              }
            }
          }
        }
      }
      return best as Points;
    };

    // Search the grid from value - epsilon to value + epsilon for each piece and keep the best combination.
    const nudge = (
      data: Data,
      points: Points,
      epsilon: number,
      lossFunc: LossFunc,
    ) => {
      return optimizeInRange(
        data,
        Object.fromEntries(
          Object.entries(points).map(([key, value]) => {
            return [
              key,
              {
                min: value - epsilon,
                max: value + epsilon,
                step: epsilon,
              },
            ];
          }),
        ) as any,
        lossFunc,
      );
    };

    // Repeat nudging at a fixed step size until the loss stops changing.
    const optimizeForEpsilon = (
      data: Data,
      initialPoints: Points,
      epsilon: number,
      lossFunc: LossFunc,
    ) => {
      let prevLoss = 0;
      let points = { ...initialPoints };
      for (let i = 0; i < 1000; i += 1) {
        points = nudge(data, points, epsilon, lossFunc);
        const loss = computeLoss(data, points, lossFunc);
        if (loss === prevLoss) {
          break;
        }
        prevLoss = loss;
        if (i > 998) {
          console.log("TOOK TOO LONG");
        }
      }
      return points;
    };

    // Coarse-to-fine search: shrink the step size by 10x on each pass.
    const getPointValues = (
      data: Data,
      lossFunc: LossFunc,
      initialValues = {
        P: 0,
        N: 0,
        B: 0,
        R: 0,
        Q: 0,
      },
    ) => {
      let pointValues = initialValues;
      pointValues = optimizeForEpsilon(data, pointValues, 1, lossFunc);
      pointValues = optimizeForEpsilon(data, pointValues, 0.1, lossFunc);
      pointValues = optimizeForEpsilon(data, pointValues, 0.01, lossFunc);
      pointValues = optimizeForEpsilon(data, pointValues, 0.001, lossFunc);
      return pointValues;
    };

    /**
     * Normalize a set of points so that the rook is 5 (and queen will usually be around 9).
     */
    const normalize = (points: Points) => {
      const factor = 5 / points.R;
      return {
        P: points.P * factor,
        N: points.N * factor,
        B: points.B * factor,
        R: points.R * factor,
        Q: points.Q * factor,
      };
    };

    // Format one table row; the first column is the number of non-king pieces.
    const formatTable = (i: number, points: Points) => {
      return `| ${i} | ${points.P.toFixed(2)} | ${points.N.toFixed(2)} | ${points.B.toFixed(2)} | ${points.R.toFixed(2)} | ${points.Q.toFixed(2)} |`;
    };

    // Compute point values for pieces based on number of pieces on the board.
    console.log("Variant 1: not starting with obviously promoted pieces");
    for (let i = 1; i <= 5; i += 1) {
      const lossFunc: LossFunc = (a, b) => Math.pow(a - b, 2);
      const data = getData({
        nonKingPieceCount: i,
        tooManyPieces: ["BBB", "NNN", "RRR", "QQ"],
      });
      const pointValues = getPointValues(data, lossFunc);
      console.log(`${i} non-king pieces`);
      console.log(formatTable(i, normalize(pointValues)));
      console.log("loss", computeLoss(data, pointValues, lossFunc));
    }

    console.log("Variant 2: different loss function");
    for (let i = 1; i <= 5; i += 1) {
      const lossFunc: LossFunc = (a, b) =>
        Math.pow(Math.atanh(a) - Math.atanh(b), 2);
      const data = getData({
        nonKingPieceCount: i,
      });
      const pointValues = getPointValues(data, lossFunc);
      console.log(`${i} non-king pieces`);
      console.log(formatTable(i, normalize(pointValues)));
      console.log("loss", computeLoss(data, pointValues, lossFunc));
    }

    console.log("Variant 3: another different loss function");
    for (let i = 1; i <= 5; i += 1) {
      const lossFunc: LossFunc = (a, b) =>
        ((a + 1) / 2) * Math.log((b + 1) / 2) +
        (1 - (a + 1) / 2) * Math.log(1 - (b + 1) / 2);
      const data = getData({
        nonKingPieceCount: i,
      });
      const pointValues = getPointValues(data, lossFunc);
      console.log(`${i} non-king pieces`);
      console.log(formatTable(i, normalize(pointValues)));
      console.log("loss", computeLoss(data, pointValues, lossFunc));
    }

Thing 38: Deriving Point Values for Chess Pieces (Part 2)

Part 1: here.

In my previous iteration of this, I ended up giving values based on an average over all piece combinations in the endgame tablebase. I was curious what would happen if I subdivided it based on the number of (non-king) pieces on the board. Here are the numbers I got today (rounded to 2 decimal places, after normalizing to R = 5):

| # pieces | P | N | B | R | Q |
|---|---|---|---|---|---|
| 1 | 2.29 | 0 | 0 | 5 | 4.83 |
| 2 | 3.02 | 1.47 | 2.41 | 5 | 9.39 |
| 3 | 2.22 | 1.94 | 2.44 | 5 | 9.18 |
| 4 | 1.95 | 2.21 | 2.61 | 5 | 9.10 |
| 5 | 1.76 | 2.34 | 2.76 | 5 | 9.05 |

I've learned that the row based on a single non-king piece being on the board is almost entirely useless. The queen is worse than the rook because it's more likely to stalemate. The bishop and knight are worth literally zero because they can't checkmate on their own. The rest is interesting though.

We'll notice a few trends: as piece count goes up, pawn and queen values go down, and knight and bishop values go up. Those trends point in the direction of traditional point values for pieces. Very interesting. I want to try fitting a logistic curve to these numbers for rows 2-5 to guess what the row 6 values will be, and then eventually, when the 8-piece tablebase comes out (since they count kings as pieces), compare with what actually comes out.

This really makes you think that as you get closer to the endgame, the bishop and knight both get weaker relative to other pieces and the pawn gets stronger.

Code below. Download stats.json from the lichess tablebase to run the code.

    import fs from "node:fs";

    const stats = JSON.parse(fs.readFileSync("stats.json", "utf-8"));

    type Data = {
      material: string;
      gamePoints: number;
    }[];

    const getData = (options?: {
      /**
       * How much a cursed win counts as (cursed win = would be a win if not for the 50-move rule)
       * Any value between 0 and 1 makes sense.
       */
      curseFactor?: number;
      nonKingPieceCount?: number;
    }): Data => {
      const { curseFactor = 0.25, nonKingPieceCount = 5 } = options ?? {};
      return Object.entries(stats)
        .map(([key, e]: [string, any]) => {
          const wins = e.histogram.white.wdl[2] + e.histogram.black.wdl[-2];
          const cursedWins =
            e.histogram.white.wdl[1] + e.histogram.black.wdl[-1];
          const draws = e.histogram.white.wdl[0] + e.histogram.black.wdl[0];
          const blessedLosses =
            e.histogram.white.wdl[-1] + e.histogram.black.wdl[1];
          const losses = e.histogram.white.wdl[-2] + e.histogram.black.wdl[2];
          const total = wins + cursedWins + draws + blessedLosses + losses;
          const gamePoints =
            (wins +
              curseFactor * cursedWins -
              curseFactor * blessedLosses -
              losses) /
            total;
          return {
            material: key,
            gamePoints,
          };
        })
        .filter(
          ({ material }) =>
            material.length === "KvK".length + nonKingPieceCount,
        );
    };

    type Piece = "P" | "N" | "B" | "R" | "Q";
    type Points = Record<Piece, number>;

    const sigmoid = (x: number) => Math.tanh(x);
    const sum = (arr: number[]) => arr.reduce((a, b) => a + b, 0);

    const predictGame = (points: Points, material: [string, string]) => {
      const [mat1, mat2] = material;
      return sigmoid(
        sum([...mat1].map((p) => (p in points ? points[p as Piece] : 0))) -
          sum([...mat2].map((p) => (p in points ? points[p as Piece] : 0))),
      );
    };

    const getBaselineLoss = (data: Data) => {
      let loss = 0;
      for (const { gamePoints } of data) {
        const prediction = 0;
        loss += Math.pow(gamePoints - prediction, 2);
      }
      return loss;
    };

    const computeLoss = (data: Data, points: Points) => {
      let loss = 0;
      for (const { material, gamePoints } of data) {
        const prediction = predictGame(
          points,
          material.split("v") as [string, string],
        );
        loss += Math.pow(gamePoints - prediction, 2);
      }
      return loss / getBaselineLoss(data);
    };

    const optimizeInRange = (
      data: Data,
      constraints: Record<Piece, { min: number; max: number; step: number }>,
    ): Points => {
      let bestLoss = Infinity;
      let best: Points | null = null;
      let i = 0;
      for (
        let p = constraints.P.min;
        p <= constraints.P.max;
        p += constraints.P.step
      ) {
        for (
          let n = constraints.N.min;
          n <= constraints.N.max;
          n += constraints.N.step
        ) {
          for (
            let b = constraints.B.min;
            b <= constraints.B.max;
            b += constraints.B.step
          ) {
            for (
              let r = constraints.R.min;
              r <= constraints.R.max;
              r += constraints.R.step
            ) {
              for (
                let q = constraints.Q.min;
                q <= constraints.Q.max;
                q += constraints.Q.step
              ) {
                i += 1;
                const pointValues = {
                  P: p,
                  N: n,
                  B: b,
                  R: r,
                  Q: q,
                };
                const loss = computeLoss(data, pointValues);
                if (loss < bestLoss) {
                  best = pointValues;
                  bestLoss = loss;
                }
              }
            }
          }
        }
      }
      return best as Points;
    };

    const nudge = (data: Data, points: Points, epsilon: number) => {
      return optimizeInRange(
        data,
        Object.fromEntries(
          Object.entries(points).map(([key, value]) => {
            return [
              key,
              {
                min: value - epsilon,
                max: value + epsilon,
                step: epsilon,
              },
            ];
          }),
        ) as any,
      );
    };

    const optimizeForEpsilon = (
      data: Data,
      initialPoints: Points,
      epsilon: number,
    ) => {
      let prevLoss = 0;
      let points = { ...initialPoints };
      for (let i = 0; i < 1000; i += 1) {
        points = nudge(data, points, epsilon);
        const loss = computeLoss(data, points);
        if (loss === prevLoss) {
          break;
        }
        prevLoss = loss;
        if (i > 998) {
          console.log("TOOK TOO LONG");
        }
      }
      return points;
    };

    const getPointValues = (
      data: Data,
      initialValues = {
        P: 0,
        N: 0,
        B: 0,
        R: 0,
        Q: 0,
      },
    ) => {
      let pointValues = initialValues;
      pointValues = optimizeForEpsilon(data, pointValues, 1);
      pointValues = optimizeForEpsilon(data, pointValues, 0.1);
      pointValues = optimizeForEpsilon(data, pointValues, 0.01);
      pointValues = optimizeForEpsilon(data, pointValues, 0.001);
      return pointValues;
    };

    /**
     * Normalize a set of points so that the rook is 5 (and queen will usually be around 9).
     */
    const normalize = (points: Points) => {
      const factor = 5 / points.R;
      return {
        P: points.P * factor,
        N: points.N * factor,
        B: points.B * factor,
        R: points.R * factor,
        Q: points.Q * factor,
      };
    };

    // Compute point values for pieces based on number of pieces on the board.
    for (let i = 1; i <= 5; i += 1) {
      const data = getData({ nonKingPieceCount: i });
      const pointValues = getPointValues(data);
      console.log(`${i} non-king pieces`);
      console.log(normalize(pointValues));
      console.log("loss", computeLoss(data, pointValues));
    }

Thing 37: Deriving Point Values for Chess Pieces (Part 1)

I was curious to see if I could determine point values for chess pieces based on endgame tablebases. The model is that the difference between the two players' summed piece values determines the expected result of the game for a given player via a sigmoid function. The end result is the following:

- pawn: 0.327

- knight: 0.403

- bishop: 0.479

- rook: 0.889

- queen: 1.610

The pawns are overrated here because we use endgame tablebases, and in endgames pawns are much more likely to promote and are therefore more valuable. The queen-to-rook ratio is pretty close to 9:5, and bishops and knights come out slightly weaker than their traditional point values. Here are the point values rescaled to make the rook worth 5:

- pawn: 1.84

- knight: 2.27

- bishop: 2.69

- rook: 5.00

- queen: 9.06
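Spelled out as math, the model and the rescaling look roughly like this. The notation is picked just for this sketch: $v_x$ is the value of piece $x$, $M_1$ and $M_2$ are the two sides' non-king pieces, and $g$ is the observed game points in $[-1, 1]$ for that material. The tanh and the squared error are what the code uses.

$$
\hat{g}(M_1, M_2) = \tanh\Big(\sum_{x \in M_1} v_x - \sum_{x \in M_2} v_x\Big)
$$

$$
L(v) = \sum_{(M_1, M_2)} \big(g(M_1, M_2) - \hat{g}(M_1, M_2)\big)^2
$$

$$
v_x^{\text{rescaled}} = \frac{5}{v_R}\, v_x, \qquad \text{e.g. pawn: } 0.327 \times \frac{5}{0.889} \approx 1.84
$$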

Code below. Download stats.json from the lichess tablebase to run the code.

    import fs from "node:fs";

    const stats = JSON.parse(fs.readFileSync("stats.json", "utf-8"));

    // How much a cursed win counts as (cursed win = would be a win if not for the 50-move rule)
    const CURSE_FACTOR = 0.5;

    const data = Object.entries(stats).map(([key, e]: [string, any]) => {
      const wins = e.histogram.white.wdl[2] + e.histogram.black.wdl[-2];
      const cursedWins = e.histogram.white.wdl[1] + e.histogram.black.wdl[-1];
      const draws = e.histogram.white.wdl[0] + e.histogram.black.wdl[0];
      const blessedLosses = e.histogram.white.wdl[-1] + e.histogram.black.wdl[1];
      const losses = e.histogram.white.wdl[-2] + e.histogram.black.wdl[2];
      const total = wins + cursedWins + draws + blessedLosses + losses;
      const gamePoints =
        (wins +
          CURSE_FACTOR * cursedWins -
          CURSE_FACTOR * blessedLosses -
          losses) /
        total;
      return {
        material: key,
        gamePoints,
      };
    });

    type Piece = "P" | "N" | "B" | "R" | "Q";
    type Points = Record<Piece, number>;

    const sigmoid = (x: number) => Math.tanh(x);
    const sum = (arr: number[]) => arr.reduce((a, b) => a + b, 0);

    const predictGame = (points: Points, material: [string, string]) => {
      const [mat1, mat2] = material;
      return sigmoid(
        sum([...mat1].map((p) => (p in points ? points[p as Piece] : 0))) -
          sum([...mat2].map((p) => (p in points ? points[p as Piece] : 0))),
      );
    };

    const baselineLoss = (() => {
      let loss = 0;
      for (const { gamePoints } of data) {
        const prediction = 0;
        loss += Math.pow(gamePoints - prediction, 2);
      }
      return loss;
    })();

    console.log("BASELINE LOSS", baselineLoss);

    const computeLoss = (points: Points) => {
      let loss = 0;
      for (const { material, gamePoints } of data) {
        const prediction = predictGame(
          points,
          material.split("v") as [string, string],
        );
        loss += Math.pow(gamePoints - prediction, 2);
      }
      return loss;
    };

    const optimizeInRange = (
      constraints: Record<Piece, { min: number; max: number; step: number }>,
    ): Points => {
      let bestLoss = Infinity;
      let best: Points | null = null;
      let i = 0;
      for (
        let p = constraints.P.min;
        p <= constraints.P.max;
        p += constraints.P.step
      ) {
        for (
          let n = constraints.N.min;
          n <= constraints.N.max;
          n += constraints.N.step
        ) {
          for (
            let b = constraints.B.min;
            b <= constraints.B.max;
            b += constraints.B.step
          ) {
            for (
              let r = constraints.R.min;
              r <= constraints.R.max;
              r += constraints.R.step
            ) {
              for (
                let q = constraints.Q.min;
                q <= constraints.Q.max;
                q += constraints.Q.step
              ) {
                i += 1;
                const pointValues = {
                  P: p,
                  N: n,
                  B: b,
                  R: r,
                  Q: q,
                };
                const loss = computeLoss(pointValues);
                if (loss < bestLoss) {
                  best = pointValues;
                  bestLoss = loss;
                  // console.log(i, bestLoss);
                }
              }
            }
          }
        }
      }
      return best as Points;
    };

    const nudge = (points: Points, epsilon: number) => {
      return optimizeInRange(
        Object.fromEntries(
          Object.entries(points).map(([key, value]) => {
            return [
              key,
              {
                min: value - epsilon,
                max: value + epsilon,
                step: epsilon,
              },
            ];
          }),
        ) as any,
      );
    };

    const optimizeForEpsilon = (initialPoints: Points, epsilon: number) => {
      let prevLoss = 0;
      let points = { ...initialPoints };
      for (let i = 0; i < 1000; i += 1) {
        points = nudge(points, epsilon);
        const loss = computeLoss(points);
        if (loss === prevLoss) {
          break;
        }
        prevLoss = loss;
        console.log(i, loss);
        if (i > 998) {
          console.log("TOOK TOO LONG");
        }
      }
      return points;
    };

    let pointValues = {
      P: 0,
      N: 0,
      B: 0,
      R: 0,
      Q: 0,
    };

    pointValues = optimizeForEpsilon(pointValues, 1);
    pointValues = optimizeForEpsilon(pointValues, 0.1);
    pointValues = optimizeForEpsilon(pointValues, 0.01);
    pointValues = optimizeForEpsilon(pointValues, 0.001);

    console.log(pointValues);

Thing 34: Designing a Programming Language for Funsies (Part 5)

Previously: Part 1, Part 2, Part 3, Part 4.

Didn't get to do anything today, so this is just gonna be an open question. One of the other goals I'm curious to try for this language is to have every value be serializable. I'm not sure how this'll work for functions, and especially continuations. Most of the other tricky ones can be expressed in terms of functions. Functions may be expressible as their definition together with their environment, so maybe that'll work. Continuations seem much trickier. Still gotta think on that.

Thing 32: Designing a Programming Language for Funsies (Part 4)

Previously: Part 1, Part 2, Part 3.

I added a primitive parser with support for strings and added symbols for true, false, null, undefined.

Toy implementation at https://github.com/athingperday/schwelle-lang.

Thing 31: Designing a Programming Language for Funsies (Part 3)

Turns out that we need one more "aspect" to a type. Not just eval, which tells you how to evaluate a value of the given type, but in order for expressions to work, we need some kind of application aspect. Which I'll call "call". I'll also call an expression a "pair" more in line with other lisps.

I've decided to code-name the language Schwelle (the German word for "threshold"), and I've created a toy implementation at https://github.com/athingperday/schwelle-lang.

Thing 30: Designing a Programming Language for Funsies (Part 2)

In Designing a Programming Language for Funsies (Part 1), I talked about going extreme. Well, my goal is to make the core of the language so small and stupid-easy to implement that a whole standard library (or whatever) can be written in the language itself, making it as portable as possible. I'm gonna walk you through my thought process.

We start with the basic premise of the language. At any given step in the computation, you have a "frame". A frame contains the following information:

- evaluand: a thing to be evaluated

- environment: an environment in which to evaluate it

- continuation: directions on what to do with the result of the evaluation

The premise of this language is to have first-class frame-manipulation. This comes in the form of what I call xframes (or transframes for long -- since it transforms a frame -- a portmanteau -- it's clever -- get it?).

Let's talk about types. It'd be cool if types could be defined independently of the evaluation apparatus above. All that's really needed is for a type to be able to specify how it should be evaluated. Almost like each type is a "plugin" to the language itself. Most types (like numbers or strings) just store data and don't actually need to be evaluated. The ones that matter are going to be types like an expression, or a symbol. I'm yet undecided on whether the unevaluatable things should all evaluate to an "error" type if they ever end up trying to be directly evaluated, or whether they should evaluate to themselves.

We'll also want types themselves to be first-class, but we'll worry about that later.

Regardless, where we end up following that line of thought is that types carry the data of how they should be evaluated. So, roughly speaking, the language in which we're implementing our language will represent a type as an object with an "eval" function. The evaluation apparatus then calls the evaluand's type's eval function on the current frame, which outputs a new frame, which is then the next frame to be evaluated. And that's the whole of the evaluation apparatus!
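Here's a rough TypeScript sketch of that loop, just to make the shape concrete. It isn't the real implementation: the names (Value, Frame, run) and the rule that a continuation hands back the next frame, or null when there's nothing left to do, are assumptions made up for this sketch.

```typescript
// A value is some data tagged with its type; the type knows how to evaluate it.
type Value = { type: Type; data: unknown };
type Env = Map<string, Value>;

// Assumption: a continuation takes a finished value and returns the next frame,
// or null when the computation is over.
type Continuation = (result: Value) => Frame | null;

type Frame = {
  evaluand: Value;
  environment: Env;
  continuation: Continuation;
};

// Each type is a "plugin": an object with an eval function from frame to next frame.
type Type = {
  name: string;
  eval: (frame: Frame) => Frame | null;
};

// Numbers just store data, so here they evaluate to themselves.
const numberType: Type = {
  name: "number",
  eval: (frame) => frame.continuation(frame.evaluand),
};

// Symbols evaluate by looking themselves up in the environment.
const symbolType: Type = {
  name: "symbol",
  eval: (frame) => {
    const name = frame.evaluand.data as string;
    const bound = frame.environment.get(name);
    if (bound === undefined) {
      throw new Error(`unbound symbol: ${name}`);
    }
    return frame.continuation(bound);
  },
};

// The whole evaluation apparatus: keep asking the evaluand's type for the next frame.
const run = (start: Frame): void => {
  let frame: Frame | null = start;
  while (frame !== null) {
    frame = frame.evaluand.type.eval(frame);
  }
};

// Usage: evaluate the symbol x in an environment where x is bound to 42.
const results: Value[] = [];
const env: Env = new Map<string, Value>([["x", { type: numberType, data: 42 }]]);
run({
  evaluand: { type: symbolType, data: "x" },
  environment: env,
  continuation: (value) => {
    results.push(value);
    return null;
  },
});
console.log(results[0].data);
```

Having numbers evaluate to themselves is just one of the two options; swapping in an "error" result for unevaluatable types would only change numberType.eval.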

Next time, I'll give some sample code.

Thing 29: Designing a Programming Language for Funsies (Part 1)

I've had in my head for a while an idea for a programming language inspired by John Shutt's Kernel. But, I go even further than merely first-class macros and environments. I give you: first-class (well, there really isn't a word for this, so I guess for now I'll call them) transframes (or xframes for short). Basically, first-class operators that can modify the evaluation-stack.

I've done some work on this in the past, making an interpreter and such, but I'll be rebuilding it, so I can document my thought process as I go. It's gonna be inefficient as heck, but I'm curious to see where it leads.

Thing 24: Epoch Ranking

So, the most common way to assign numbers to players of a skill-based game to try to capture their skill level is with an Elo system. And I think mathematically the way it works makes a lot of sense.

But I want to try to design my own ranking system. It'll probably turn out worse than Elo's. I have a specific thing I want to try out though. I want to try to be able to ask of a game: how many tiers of domination are there between the best in the world at the game and the worst? In other words, what's the longest chain of players such that each player wins basically 100% of games against the next in the chain?

Elo's system can kind of answer this by looking at the spread between the top player's rating and the lowest. But, you kind of have to decide for yourself what win rate counts as "dominating". You have to pick a percentage (strictly less than 100) at which you consider it dominating to determine what a "tier" is for the purposes of my question. So, my system's goal is to not be arbitrary in the definition of "tier". Or as I'll call them, "epoch"s.

Okay, so let's say we give every player a rating. We want this to mean something about their skill. So, let's say, like with Elo's system, the ratings should roughly predict the probability of one player beating another. My solution is to take the most childish approach, which is way less mathematically sound than Elo's. Given two players' ratings $r_1$ and $r_2$, the probability that player 1 wins is $\frac{1}{2} + \frac{r_1 - r_2}{2}$ (clamped between 0 and 1).

Basically: if players have equal scores, they have equal chances of winning. If a player's score is more than 1 point higher than the other's, then the first player should win 100% of the time. Interpolate linearly. If it's half a point higher, then it's halfway in between (75%). And so on.

This way, our system declares literal probabilities of 1, which shouldn't exist, but which we need for the purpose of this experiment. This then lets us answer the question of how many epochs of skill there are in the game: just take the difference between the top-rated player's rating and the bottom-rated player's.
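As a tiny sketch, the linear rule (50% at equal ratings, 100% at a lead of one point or more) and the epoch count look something like this. The function names are made up for illustration.

```typescript
// Probability that player 1 beats player 2: linear in the rating difference,
// clamped to [0, 1].
const winProbability = (r1: number, r2: number): number =>
  Math.min(1, Math.max(0, 0.5 + (r1 - r2) / 2));

// Number of epochs of skill in a game: the spread between the top and bottom ratings.
const epochs = (ratings: number[]): number =>
  Math.max(...ratings) - Math.min(...ratings);

console.log(winProbability(3, 3)); // 0.5
console.log(winProbability(3.5, 3)); // 0.75
console.log(winProbability(5, 3)); // 1
console.log(epochs([1, 4.5, 2])); // 3.5
```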

In a future post, I'll discuss how this system might work in some real world examples, and how it compares to Elo's. I'll also discuss how to go about actually assigning ratings to players.

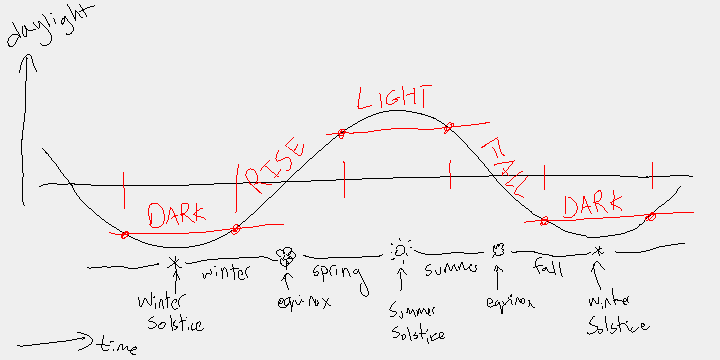

Thing 23: A Change of Seasons (aka 'Happy Rise Season')

We often think of Summer as being the hot season and where the days are longest. And Winter as the cold season where days are shortest. But, in reality the longest day of the year is at the boundary of Spring and Summer. And the shortest day is at the boundary between Autumn and Winter. Something something seasonal lag. Anyway...

I propose a new way of dividing the year into seasons. One where the longest day is in the middle of Light season, and the shortest is in the middle of Dark season. Then the remaining seasons can be called Rise season and Fall season since the amount of daylight rises from Dark season to Light season and falls from Light season to Dark season. In this way, each season is more clearly distinct from the others and starts a half-season offset from the traditional seasons.

In picture form:

Would you look at that? Today (Feb 3, 2026) is the start of Rise season!

Thing 22: Some Number Facts

I like collecting facts about natural numbers where the number is an answer to a natural-ish question. Here are two such facts:

5 is the number of Platonic solids.

14 is the largest number of distinct sets obtainable by repeatedly applying the set operations of closure and complement to a given starting subset of a topological space.

Thing 21: Some 'Alien' Scribbles

Some random scribbles that look like they might be from an alien language.

Thing 20: DriftKart Prototype

A prototype for a drifting mechanic in a racing video game. You need to plug in a game controller to be able to play. Using a GameCube Controller with an Adapter, the relevant buttons are A to drive and R to drift and the left stick to steer. Different controllers might have different button mappings. May only work on Chromium-based browsers.

Thing 18: Build/Produce Toy Model (Part 1)

Okay, so today's thing is more like a blog post in format than an image or interactive thing. Much of this was worked out around a decade ago, but I'm re-working it out as I'm finally getting around to writing it up now.

Basically, I was thinking about a player's progression through building up resources in a board game, and I came up with a toy model that oversimplifies a lot of things (that's what being a toy model is, after all). The model goes like this: imagine a 1-player game where your goal is to maximize your total number of widgets; on every turn, you have a single choice to make between two options: (1) build a widget-factory, or (2) have each widget factory you own produce one widget. The toy model doesn't account for when the game ends. If you know that the game is going to end after some specified number of turns, then it's pretty trivial to solve for the right answer: spend the first half of your turns building and the second half producing. The proof is left as an exercise to the reader.

Now, how do we want to handle the more interesting case where you don't know when the game ends? Well, perhaps the most obvious way is to pick some probability , and say each turn with probability the game ends. You could pick a specific or just use as a variable in your calculations. This isn't what I decided to do. I decided to handle it differently. I decided to make the game infinite instead. My question became: what strategy creates the fastest-growing number of widgets as a function of time? In other words, what sequence of moves gets you the best asymptotic behavior? This is the core question I aim to answer.

Okay, let's start by getting an upper bound. At time/turn , the most widgets you could have by then (as determined above) is to build for the first half and produce for the second half. No real strategy could obviously achieve this for every , but if one could, they would see that at time , they have widgets. So that's our upper bound.

Now, let's pick some obvious strategies and see what they get us, just to get a feel for the problem. Obviously the two trivial strategies of build every turn or produce every turn get us zero widgets. But we could build once and then produce every turn thereafter. At turn $t$, that gets us $t - 1$ widgets. We can do better by building twice and then producing every turn. Or better yet, building $k$ times and then producing every turn. That gets us $k(t - k)$ widgets. Still not great: for any fixed $k$, that's only linear in $t$. Not even quadratic.
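Here's a quick simulation of the build-a-few-then-produce-forever family, as a minimal sketch. The name `build_then_produce` is made up for this example, and I'm assuming widgets are counted after turn $t$ has been played, so $k$ builds followed by $t - k$ produces gives $k(t - k)$.

```python
def build_then_produce(k, t):
    """Widgets after t turns: build on the first k turns, produce on the rest."""
    factories = 0
    widgets = 0
    for turn in range(1, t + 1):
        if turn <= k:
            factories += 1
        else:
            widgets += factories
    return widgets

# For a fixed k the total grows only linearly in t.
for k in (1, 2, 5):
    for t in (10, 100, 1000):
        assert build_then_produce(k, t) == k * (t - k)
```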

Let's try the next most obvious thing: diagonalizing. That is, let's alternate build and produce every turn. At turn $t$ (let's just assume $t$ is even for simplicity), we have roughly $\frac{t^2}{8}$ widgets (the exact count differs by a term linear in $t$ that depends on whether you build or produce first). Wow! This is roughly half of the unattainably optimal upper bound!
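To pin down the alternating strategy, here's a minimal sketch that simulates it. The function name `alternate` and the `build_first` flag are my own, and widgets are counted after $t$ turns have been played. Under those assumptions, building first gives $\frac{t^2}{8} + \frac{t}{4}$ for even $t$, and producing first gives $\frac{t^2}{8} - \frac{t}{4}$.

```python
def alternate(t, build_first=True):
    """Widgets after t turns of strictly alternating build and produce moves."""
    factories = 0
    widgets = 0
    for turn in range(t):
        # Even-indexed turns get the first move type, odd-indexed turns the other.
        is_build = (turn % 2 == 0) == build_first
        if is_build:
            factories += 1
        else:
            widgets += factories
    return widgets

for t in (10, 100, 1000):
    assert alternate(t, build_first=True) == t * (t + 2) // 8
    assert alternate(t, build_first=False) == t * (t - 2) // 8
    # Fraction of the t^2 / 4 upper bound actually achieved.
    print(t, alternate(t) / (t * t / 4))
```

Either way, the linear term washes out as $t$ grows, and the ratio to the $\frac{t^2}{4}$ bound tends to one half.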

(End of part 1. To be continued...)

Thing 17: Fake Constellation Generator

I made a random fake constellation generator. You can specify any string as a seed, a number of stars, a threshold for connections, and a method for connections. Out you get a constellation. I think (toilet, 35, 2.5, min) looks pretty cool. I also like (gggg, 75, 3, multiply), (bl, 64, 9, add), and (ohio, 51, 4, max). Play around; see what you can make.

Thing 16: Probability Converter

I made a converter between different ways of thinking about probabilities. It allows conversion between probabilities, odds, and log odds measured in bits, logits, and decibels. The UI is pretty terrible, sorry.
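For a feel of what those conversions look like, here's a minimal sketch. It is not the converter's actual code. The function names are made up, and I'm assuming "bits" means log base 2 of the odds, "logits" means the natural log of the odds, and "decibels" means 10 times log base 10 of the odds.

```python
import math

def log_odds(p, unit="bits"):
    """Convert a probability (strictly between 0 and 1) to log odds in the given unit."""
    odds = p / (1 - p)
    if unit == "bits":
        return math.log2(odds)
    if unit == "logits":
        return math.log(odds)
    if unit == "decibels":
        return 10 * math.log10(odds)
    raise ValueError(f"unknown unit: {unit}")

def probability(value, unit="bits"):
    """Convert log odds in the given unit back to a probability."""
    if unit == "bits":
        odds = 2 ** value
    elif unit == "logits":
        odds = math.exp(value)
    elif unit == "decibels":
        odds = 10 ** (value / 10)
    else:
        raise ValueError(f"unknown unit: {unit}")
    return odds / (1 + odds)

# Even odds are zero in every log unit, and 4:1 odds (p = 0.8) are about 2 bits.
assert log_odds(0.5, "decibels") == 0.0
assert math.isclose(log_odds(0.8, "bits"), 2.0)
assert math.isclose(probability(log_odds(0.3, "logits"), "logits"), 0.3)
```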

Thing 15: Bongard Problem 3

My third Bongard problem. Well, really it's the fourth I made, but I wanted to push an easier one earlier in the lineup.

Thing 14: Spectrogram Player v2

Yesterday, I tried to make a spectrogram-player that plays a spectrogram (see thing 13). It was pretty bad. Today I tried a second approach I learned of after making the thing yesterday. This time I use white noise and bandpass filters. I think it's much better than yesterday's. Feel free to compare using the same images in both.

Thing 13: Spectrogram Player

I tried to make a spectrogram-player that plays a spectrogram. It's pretty bad. Below are two hand-drawn spectrograms you can download and upload to try it on. You can also upload any image, but if the image doesn't look like this, then your ears may bleed even more.

The way it does this is it makes a pure sine wave for each row in the image and alters the volume according to the brightness throughout. I learned after making this there are better ways of doing this such as using a noise source with band pass filters. Oh well. Also, no idea how well this would work on an actual spectrogram. Will just have to wait until I make a spectrogram-maker I guess.
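Here's a rough sketch of the one-sine-wave-per-row idea, not the player's actual code. Everything specific is an assumption for illustration: the image is a list of rows of brightness values between 0 and 1, the top row is the highest frequency, frequencies are spaced linearly between `f_min` and `f_max`, and each column lasts `seconds_per_column`.

```python
import math

def synthesize(image, sample_rate=8000, seconds_per_column=0.05,
               f_min=200.0, f_max=2000.0):
    """Turn a grid of brightness values into a list of audio samples in [-1, 1]."""
    rows = len(image)
    cols = len(image[0])
    # One fixed frequency per row, top row highest.
    freqs = [f_max - (f_max - f_min) * r / max(rows - 1, 1) for r in range(rows)]
    samples_per_column = round(sample_rate * seconds_per_column)
    samples = []
    for n in range(cols * samples_per_column):
        col = n // samples_per_column
        t = n / sample_rate
        # Each row's sine wave is scaled by that pixel's brightness.
        value = sum(image[r][col] * math.sin(2 * math.pi * freqs[r] * t)
                    for r in range(rows))
        samples.append(value / rows)  # keep the mix within [-1, 1]
    return samples

# A tiny 2x3 "spectrogram": a high tone, then both tones, then a low tone.
samples = synthesize([[1.0, 1.0, 0.0],
                      [0.0, 1.0, 1.0]])
assert len(samples) == 3 * 400
assert all(-1.0 <= s <= 1.0 for s in samples)
```

The hard jumps in volume between columns are one plausible reason this approach sounds rough.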

Thing 12: Blood Type Calculator: Better Rh Inference

Continuation of thing 9.

Better Rh factor inference. It can now infer, e.g., that if a child is Rh positive and one parent is Rh negative, the other parent must be Rh positive.
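The reasoning behind that inference can be sketched as follows. This is a simplified illustration, not the calculator's actual code: it assumes the textbook single-gene model where Rh positive (`D`) is dominant over Rh negative (`d`), and the names `RH_GENOTYPES`, `phenotype`, and `possible_other_parent` are made up for the example.

```python
from itertools import product

# Possible genotypes for each Rh phenotype under the simplified model.
RH_GENOTYPES = {"+": [("D", "D"), ("D", "d")], "-": [("d", "d")]}

def phenotype(genotype):
    """Rh positive if at least one dominant D allele is present."""
    return "+" if "D" in genotype else "-"

def possible_other_parent(child_rh, known_parent_rh):
    """Which Rh types the other parent could have, given the child and one parent."""
    possible = set()
    for other_rh in ("+", "-"):
        for g_known, g_other in product(RH_GENOTYPES[known_parent_rh],
                                        RH_GENOTYPES[other_rh]):
            # The child gets one allele from each parent.
            children = {phenotype((a, b)) for a in g_known for b in g_other}
            if child_rh in children:
                possible.add(other_rh)
    return possible

# An Rh positive child with an Rh negative parent forces the other parent to be Rh positive.
assert possible_other_parent("+", "-") == {"+"}
# An Rh negative child tells us nothing about the other parent's phenotype.
assert possible_other_parent("-", "-") == {"+", "-"}
```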

Thing 9: Blood Type Calculator

I made a blood type calculator that deduces what some family members' blood types must be given the blood types of other people in the family. It's not perfect at inferring all possible information yet, but it can infer some interesting things.

Put in some family members whose blood types you know, and see if it can correctly deduce someone's blood type! The more family members you add (including grandparents and cousins, etc.), the more likely it will be to narrow it down more.

For example, try adding in Alice with blood type A and Bob with blood type B and give them a kid. See how the kid could still be any blood type. Now give Alice and Bob each two parents with blood types AB. Now the kid can only be blood type AB.
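The Alice and Bob example can be checked with a short genotype enumeration. This is a minimal sketch under the standard ABO model (A and B codominant, O recessive), not the calculator's actual logic, and all names in it are made up for illustration.

```python
from itertools import product

# Possible genotypes for each ABO blood type.
ABO_GENOTYPES = {
    "A": [("A", "A"), ("A", "O")],
    "B": [("B", "B"), ("B", "O")],
    "AB": [("A", "B")],
    "O": [("O", "O")],
}

def abo_type(genotype):
    """Blood type for a pair of alleles; O only shows when nothing else is present."""
    return "".join(sorted(set(genotype) - {"O"})) or "O"

def child_genotypes(g1, g2):
    """All genotypes a child could inherit, one allele from each parent."""
    return {tuple(sorted((a, b))) for a in g1 for b in g2}

def genotypes_given_parents(own_type, parent1_type, parent2_type):
    """Genotypes consistent with a person's own type and both parents' types."""
    result = set()
    for g1, g2 in product(ABO_GENOTYPES[parent1_type], ABO_GENOTYPES[parent2_type]):
        result |= {g for g in child_genotypes(g1, g2) if abo_type(g) == own_type}
    return result

def child_types(genotypes1, genotypes2):
    """Blood types a child could have, given each parent's possible genotypes."""
    return {abo_type(g)
            for g1, g2 in product(genotypes1, genotypes2)
            for g in child_genotypes(g1, g2)}

# Knowing only that Alice is A and Bob is B, the kid could be anything.
assert child_types(ABO_GENOTYPES["A"], ABO_GENOTYPES["B"]) == {"A", "B", "AB", "O"}

# With AB grandparents on both sides, Alice must be AA and Bob must be BB.
alice = genotypes_given_parents("A", "AB", "AB")
bob = genotypes_given_parents("B", "AB", "AB")
assert child_types(alice, bob) == {"AB"}
```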

Future improvements I want to make: (1) infer that if one parent is Rh negative and the child is Rh positive, the other parent must be Rh positive, (2) output probabilities based on Bayesian reasoning, instead of just which ones are possible.

Disclaimer: the UI was partially written by an LLM, but the main focus of this post was on the logic of the calculation, which was entirely written by me.

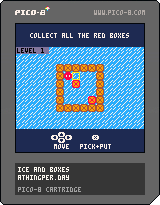

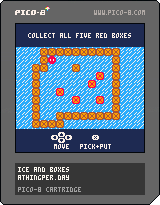

Thing 7: Ice and Boxes Puzzle Game New Levels

I made two new levels for the Ice and Boxes puzzle game.

Thing 6: My First Bongard Problem

I made this about a year or two ago, but today's thing is a Bongard Problem. I have a backlog of around a dozen of these (some better than others), but this was the first one I made, and I think one of my better (and harder) ones.

Thing 5: Make Your Own Smiley

It's a simple make-your-own-smiley toy.

Thing 3: Pico Player

I made a reusable modular React component for playing PICO-8 games (@athingperday/react-pico-player). Here's an example of it being used to play yesterday's puzzle:

Thing 2: Ice and Boxes Puzzle

Today's thing is a puzzle I made with PICO-8.

To play, download the image below, and load it up in PICO-8.

Thing 1: athingper.day Website

Hi! I'm athingperday and I will post one thing every day. Could be something big. Could be something small. But every day will be something. Today's thing is that I made a website (athingper.day) to hold all my things.